This started with a tweet. I’m embarrassed how often that happens.

Frustrated by a sense of global mispriorities, I blurted out some snarky and mildly regrettable tweets on the lack of attention to climate change in the tech industry (Twitter being a sublime medium for the snarky and regrettable). Climate change is the problem of our time, it’s everyone’s problem, and most of our problem-solvers are assuming that someone else will solve it.

I’m grateful to one problem-solver, who wrote to ask for specifics —

The notes below are my attempt to answer that question.

This is a “personal view”, biased by my experiences and idiosyncrasies. I’ve followed the climate situation for some time, including working on Al Gore’s book Our Choice, but I can’t hope to convey the full picture — just a sliver that’s visible from where I’m standing. I urge you to talk to many scientists and engineers involved in climate analysis and energy, and see for yourself what the needs are and how you can contribute.

This is aimed at people in the tech industry, and is more about what you can do with your career than at a hackathon. I’m not going to discuss policy and regulation, although they’re no less important than technological innovation. A good way to think about it, via Saul Griffith, is that it’s the role of technologists to create options for policy-makers.

I’m also only going to directly discuss technology related to the primary cause of climate change (the burning of fossil fuels), although there are technological needs related to other causes (livestock, deforestation, global poverty), as well as mitigating symptoms of climate change (droughts and storms, ecosystem damage, mass migrations).

I won’t say much about this, but I can’t leave it out. Available funding sources, and the interests and attitudes of the funders, have always had an enormous effect on what technology comes to exist in the world. In a time of crisis, it’s the responsibility of those holding the capital to sponsor work on averting the crisis. That is not happening.

Possibly the largest source of public funding is in the form of financing programs, such as loan guarantees and tax credits.

[The U.S. energy loan program] literally kick-started the whole utility-scale photovoltaic industry... The program funded the first of five huge solar projects in the West... Before that, developers couldn’t get money from private lenders. But now, with proven business models, they can. (source)

It’s great that this funding exists, but let’s be clear — it’s a pittance. If we take Saul Griffith’s quote at face value and accept that addressing climate change will take a concerted global effort comparable to World War II, consider that the U.S. spent about $4 trillion in today’s dollars to “fight the enemy” at that time. Our present enemy is more threatening, and our financial commitment to the fight is several orders of magnitude off.

There is... no scenario in which we can avoid wartime levels of spending in the public sector — not if we are serious about preventing catastrophic levels of warming, and minimizing the destructive potential of the coming storms. (source)

In the meantime, the fossil fuel industry is being subsidized at about half a trillion dollars a year.

Public funding for clean energy is a problem to be solved. It’s not a technology problem. But it’s a blocker that prevents us from getting to the technology problems.

You can read the story of the so-called “cleantech bubble”. In brief — between 2008 and 2011, about $15 billion of enthusiastic venture capital went into energy-related startups. There were a few major failures, investors got spooked, and cleantech became taboo.

Late-stage venture funding has dwindled, and early-stage funding has all but disappeared. Founders spend their days scrounging instead of building. Consider the American energy storage company Primus Power, which had to raise its series C from a South African platinum company, and series D from Kazakhstan.

The human race uses 18 terawatts, and will for the foreseeable future. So there are basically only two scenarios for investors as a collective:

(a) invest in clean energy immediately; clean energy takes over the $6 trillion global energy market; investors get a nice piece of that.

(b) don’t invest in clean energy immediately; fossil fuels burn past our carbon budget; investors inherit a cinder.

Scenario (a) seems like the most rational plan for everyone, in the long term. The fact that most investors’ short-term incentives are structured to prefer scenario (b) is a critical problem to be solved. Again, it’s not a technology problem, but it’s a blocker that prevents us from getting to the technology problems.

The primary cause of global warming is the dumping of carbon dioxide into the sky.

The primary cause of that is the burning of coal, natural gas, and petroleum in order to generate electricity and move vehicles around.

In order to stop dumping carbon dioxide into the sky, the world will have to generate its energy “cleanly”. For the purposes of this essay, that will mean mostly via solar and wind, although geothermal, hydroelectric, biomass, and nuclear will all have parts to play.

This is well-known, but the scale and rate of change required are often unappreciated. Saul Griffith has a good bit about this, suggesting that what’s needed is not throwing up a few solar panels, but a major industrial shift comparable to retooling for World War II.

In 1940 through 1942, U.S. war-related industrial production tripled each year. That’s over twice as fast as Moore’s law.
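The comparison with Moore's law can be checked with a few lines of arithmetic. This is only a sketch: the tripling figure comes from the text, while the reading of Moore's law as one doubling every 18 or 24 months is my assumption, since both versions are in common use.

```python
import math

# From the text: war-related production tripled each year.
WAR_GROWTH_PER_YEAR = 3.0

# Assumption: Moore's law read as one doubling every 18 or 24 months.
MOORE_DOUBLING_MONTHS = (18, 24)


def doublings_per_year(annual_growth_factor: float) -> float:
    """Convert a yearly growth factor into doublings per year."""
    return math.log2(annual_growth_factor)


war_rate = doublings_per_year(WAR_GROWTH_PER_YEAR)  # roughly 1.58 doublings a year

# How many times faster than Moore's law, under each reading.
speedup = {
    months: war_rate / (12 / months)
    for months in MOORE_DOUBLING_MONTHS
}

for months, ratio in speedup.items():
    print(f"vs. doubling every {months} months: {ratio:.2f}x as fast")
```

Under either reading the ratio comes out above two (roughly 2.4 and 3.2), which is consistent with "over twice as fast".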

In order to avoid the more catastrophic climate scenarios, global production and adoption of clean energy technology will have to scale at similar rates — but continuously for 15 years or more.

The catalyst for such a scale-up will necessarily be political. But even with political will, it can’t happen without technology that’s capable of scaling, and economically viable at scale.

As technologists, that’s where we come in.

Many people seem to assume that breakthroughs in clean energy will come in the form of new generation technology — fusion reactors, nanoscale solar cells, that sort of thing.

Those will be good things to have! But there’s more than enough power available to today’s solar cells and wind turbines — if only the systems were cheaper, simpler, and scalable. Here are a few examples of the kinds of projects I find interesting:

Makani hoists its wind turbine to high altitudes with a flying wing instead of a tower.

Makani’s energy kite actually operates on the same aerodynamic principles as a conventional wind turbine, but is able to replace tons of steel with lightweight electronics, advanced materials, and smart software. By using a flexible tether, energy kites eliminate 90% of the materials used in conventional wind turbines, resulting in lower costs. (source)

Altaeros uses a blimp.

The Altaeros Buoyant Airborne Turbine reduces the second largest cost of wind energy – the installation and transport cost – by up to 90 percent, through a containerized deployment that does not require a tower, crane, or cement foundation. (source)

Sunfolding does solar tracking with pneumatically-actuated plastic soda bottles.

Actuation and control are the highest cost components of today’s tracking systems. Together they account for nearly 50% of the tracking system cost. We replace both with our new approach to tracker design. (source)

These projects aren’t about better generators. They are about dramatically reducing the cost of the stuff around the generator.

Some of the best news of the last few years is the plunging cost of solar power. It’s instructive to look at what exactly is responsible for the drop. It’s partly cheaper solar panels, due to improved conversion efficiency and falling manufacturing costs. But panels are now so cheap that they only make up 25% of the total system cost.

The majority of the price drop is now due to better inverters, and better mounting racks, and better installation techniques, and better ways for solar companies to interact with customers. There’s innovation everywhere, and you don’t need to be on the photovoltaic manufacturing line in order to play.

The reason that these reductions in system cost are potentially so significant is the tipping point once solar and wind are consistently cheaper than fossil fuels and can be scaled up to meet demand.

My point here is that there are many ways of contributing toward innovation in the production of clean energy without going off and building a fusion reactor. Look at the stuff around the things.

Even for something as physical as power generation, the right software can make a significant contribution. A few examples that come to mind:

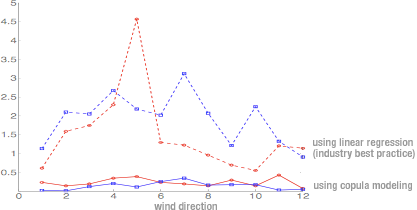

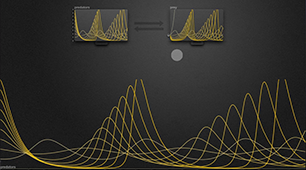

Kalyan Veeramachaneni et al. at MIT used modern probabilistic modeling to dramatically improve the process of estimating wind capacity at a location, calculating more accurate predictions in a fraction of the time.

We talked with people in the wind industry, and we found that they were using a very, very simplistic mechanism to estimate the wind resource at a site. (source)

They didn’t invent a windmill; they invented an algorithm to determine where the windmill should go. It’s now a startup.

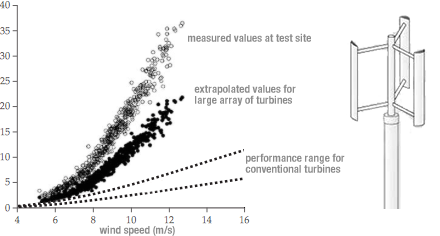

John Dabiri’s team at Caltech used aerodynamic analysis to dramatically improve the energy production and compactness of wind farms. Their work computes the optimal placement of vertical turbines so they reinforce each other instead of interfering, making possible large arrays of small turbines.

This approach dramatically extends the reach of wind energy, as smaller wind turbines can be installed in many places that larger systems cannot, especially in built environments. ... Favorable economics stem from an orders-of-magnitude reduction in the number of components in a new generation of simple, mass-manufacturable (even 3D-printable), vertical-axis wind turbines. (source)

Again, they didn’t invent a new windmill; they used computational modeling to make viable a much smaller and cheaper existing windmill.

Makani and Sunfolding both sprang from Saul Griffith’s Otherlab, and much of Otherlab’s magic lies in their code. A common theme is replacing physical material with dynamic control systems:

[Makani’s kite’s] computer system uses GPS and other sensors along with thousands of real-time calculations to guide the kite to the flight path with the strongest and steadiest winds for maximum energy generation. (source)

The really big themes I’d like to emphasize, because we need more people to join the club, so to speak, is the importance of being able to substitute a control system — sensors and computers — for actual materials... We are actually now replacing atoms with bits. (source)

as well as creating new software tools for design and manufacturing:

Griffith’s team had to write modeling software for the inflatables, because nobody had done anything like it before. But Otherlab creates its own software much of the time anyway. The 123D line of 3D modeling software offered by Autodesk grew out of one of his projects.

“We write all of our own tools, no matter what project we’re building,” Griffith says. “Pretty much anything that we’re doing requires some sort of design tool that didn’t exist before. In fact, the design tools that we write to do the projects that we’re doing are a sort of product in and of themselves.” (source)

My point here is that software isn’t just for drawing pixels and manipulating databases — it underlies a lot of the innovation even in physical technology. More on this below.

I recently visited the California ISO, which orchestrates the power grid to match energy production with consumption in realtime. The ISO exists because every megawatt that is generated at a power plant must be immediately consumed somewhere else. There’s no buffer in the system, aside from a few reservoirs where they can occasionally pump water uphill.

So they’ve evolved a complex apparatus for forecasting energy demand, and sending realtime price signals to producers to make them adjust their output as needed. This is becoming a formidable challenge as more and more intermittent (thus, unpredictable) renewables like solar and wind come online, and dirty and expensive peaker plants have to be ramped up or down on a moment’s notice to balance them out.
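One way to picture this balancing act is a merit-order dispatch: rank the available generators by marginal cost, switch them on cheapest-first until demand is covered, and let the last unit switched on set the price. The sketch below is a textbook simplification, not the California ISO's actual market software, and the plant names, capacities, and costs are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Generator:
    name: str
    capacity_mw: float
    cost_per_mwh: float  # marginal cost of running the plant


def dispatch(generators, demand_mw):
    """Fill demand cheapest-first. Returns ({name: output_mw}, clearing_price)."""
    schedule = {}
    remaining = demand_mw
    price = 0.0
    for gen in sorted(generators, key=lambda g: g.cost_per_mwh):
        if remaining <= 0:
            break
        output = min(gen.capacity_mw, remaining)
        schedule[gen.name] = output
        remaining -= output
        price = gen.cost_per_mwh  # the marginal unit sets the price
    if remaining > 1e-9:
        raise ValueError("demand exceeds total capacity")
    return schedule, price


# Hypothetical fleet; every number here is made up for illustration.
FLEET = [
    Generator("solar", 300.0, 0.0),
    Generator("wind", 200.0, 0.0),
    Generator("gas_baseload", 400.0, 40.0),
    Generator("gas_peaker", 200.0, 120.0),
]

sunny_schedule, sunny_price = dispatch(FLEET, 700.0)

# A cloud bank cuts solar output to 50 MW while demand stays the same.
cloudy_fleet = [replace(g, capacity_mw=50.0) if g.name == "solar" else g for g in FLEET]
cloudy_schedule, cloudy_price = dispatch(cloudy_fleet, 700.0)

print(sunny_schedule, sunny_price)
print(cloudy_schedule, cloudy_price)
```

In the sunny case the peaker never runs. When the clouds arrive, the same demand pulls the peaker online and it sets a much higher price, which is the ramping problem in miniature.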

Everything about today’s power grid, from this centralized control to the aging machinery performing transmission and distribution, is not suitable for clean energy. The power grid was designed to take input from a handful of tightly-regulated power plants running in synchrony, not millions of solar roofs. And it was designed for power plants that were always available and predictable, not intermittent sources like the sun and wind.

If everyone in Los Angeles put solar panels on their roofs, plugged electric cars into their garages and used smart power-meters today, something interesting would happen. The electric grid would collapse. (source)

In order for clean energy to work, it needs to get from where and when it is generated, to where and when it is needed. The “where” and “when” call for networking and storage, respectively.

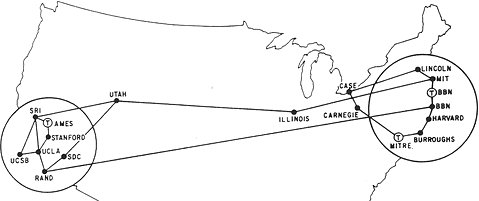

The key idea that made the internet possible was the move from centralized circuit-switched networks to distributed packet-switching protocols, where data could “find its way” from sender to receiver.

Now it’s energy that needs to find its way.

The power network... will undergo the same kind of architectural transformation in the next decades that computing and the communication network has gone through in the last two.

We envision a future network with hundreds of millions of active endpoints. These are not merely passive loads as are most endpoints today, but endpoints that may generate, sense, compute, communicate, and actuate. They will create both a severe risk and a tremendous opportunity: an interconnected system of hundreds of millions of distributed energy resources (DERs) introducing rapid, large, and random fluctuations in power supply and demand...

As infrastructure deployment progresses, the new bottleneck will be the need for overarching frameworks, foundational theories, and practical algorithms to manage a fully [data-centric] power network. (source)

This is an algorithms problem! It’s TCP/IP for energy. Think of these algorithms as hybrids of distributed networking protocols and financial trading algorithms — they are routing energy as well as participating in a market.
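To make the "market" half of that hybrid concrete, here is a minimal sketch of price-based coordination. Every endpoint decides locally how much to produce or consume at the current price, and the price is nudged up or down until supply and demand cancel. This is one simple technique among many, not the method proposed in the quoted paper; the endpoint types, response curves, and numbers are all hypothetical.

```python
from typing import Callable, List

# An endpoint maps a price ($/kWh) to net power (kW): positive = supply, negative = demand.
Endpoint = Callable[[float], float]


def flexible_load(max_kw: float, cutoff_price: float) -> Endpoint:
    """Load drawing max_kw when power is free, tapering to zero at cutoff_price."""
    def respond(price: float) -> float:
        return -max_kw * max(0.0, 1.0 - price / cutoff_price)
    return respond


def dispatchable_source(max_kw: float, full_price: float) -> Endpoint:
    """Source ramping from zero output at price 0 to max_kw at full_price."""
    def respond(price: float) -> float:
        return max_kw * min(1.0, max(0.0, price / full_price))
    return respond


def fixed_source(kw: float) -> Endpoint:
    """Source such as rooftop solar that produces regardless of price."""
    return lambda price: kw


def find_balancing_price(endpoints: List[Endpoint], step: float = 0.01,
                         iterations: int = 200) -> float:
    """Nudge the price until supply and demand cancel."""
    price = 0.0
    for _ in range(iterations):
        surplus_kw = sum(endpoint(price) for endpoint in endpoints)
        # Surplus pushes the price down, shortage pushes it up.
        price = max(0.0, price - step * surplus_kw)
    return price


# Hypothetical neighborhood: two flexible loads, one battery-like source, one solar roof.
ENDPOINTS = [
    flexible_load(10.0, 0.50),
    flexible_load(5.0, 0.30),
    dispatchable_source(8.0, 0.40),
    fixed_source(3.0),
]

price = find_balancing_price(ENDPOINTS)
print(f"balancing price: ${price:.3f}/kWh")
```

No endpoint needs to know anything about the others; the price carries all the coordination. The step size matters, though: too large and the price oscillates instead of settling. A real protocol would also have to respect line capacities and cope with delays and dropped messages, which is where the networking half comes in.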

TCP/IP spawned quite an industry of infrastructure and applications. It’s likely that something similar will happen as the smart grid gets underway. One DOE-funded project attempting to provide the infrastructure for such an industry is VOLTTRON, an “Intelligent Agent Platform for the Smart Grid”.

VOLTTRON is an innovative distributed control and sensing software platform. Its source code has been released, making it possible for researchers and others to use this tool to build applications for more efficiently managing energy use among appliances and devices, including heating, ventilation and air conditioning (HVAC) systems, lighting, electric vehicles and others. (source)

VOLTTRON is not an application such as demand response – demand response can be implemented as an application on top of VOLTTRON.

VOLTTRON is open, flexible and modular, and already benefits from community support and development. (source)

Protocols for moving data around were the big thing for a few decades. Then it was moving money around. The next big thing will be moving energy around.

Relying on sun and wind is only possible if we can store up their energy for when it’s not sunny or windy. Many people assume that we’ll “just use batteries”, but the scale is off by a few orders of magnitude. All the batteries on earth can store less than ten minutes of the world’s energy. At currently anticipated growth rates, we wouldn’t have the batteries we need for eighty years.
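The scale gap is easy to work out. A rough sketch, using the 18-terawatt figure quoted earlier; the one-day target is my own illustrative yardstick, not an estimate of how much storage a clean grid would actually need.

```python
WORLD_POWER_TW = 18.0  # from the text: average rate of human energy use


def energy_twh(power_tw: float, minutes: float) -> float:
    """Energy (TWh) used when drawing power_tw for the given number of minutes."""
    return power_tw * minutes / 60.0


ten_minutes = energy_twh(WORLD_POWER_TW, 10)    # ceiling implied for all existing batteries
one_day = energy_twh(WORLD_POWER_TW, 24 * 60)   # illustrative target: a full day in reserve

print(f"10 minutes of world energy: {ten_minutes:.0f} TWh")
print(f"24 hours of world energy:   {one_day:.0f} TWh ({one_day / ten_minutes:.0f}x more)")
```

Ten minutes at 18 TW is 3 TWh, so "less than ten minutes" puts every battery on earth below that. A single day in reserve would take 432 TWh, 144 times more.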

Grid-scale energy storage is perhaps the most critical technology problem in clean energy. When I visited the ISO, the operator was just about imploring us to invent better energy storage technologies, because they would change the game entirely.

Tesla recently announced their home battery initiative, and a Gigafactory to produce them.

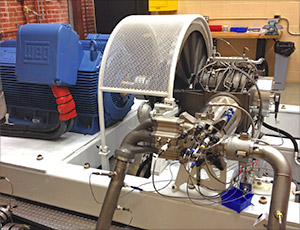

My friend Danielle Fong started LightSail Energy to store energy by compressing air with an engine.

There are various companies pursuing variations on batteries, compressors, flywheels, thermal storage, water pumps, and more.

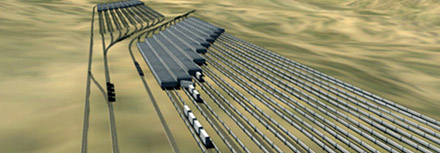

Particularly charming is Advanced Rail Energy Storage, whose proposal is

using lower-cost power to drive a train uphill and then let the train roll downhill to produce power when market prices are high. (source)
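The physics here is just gravitational potential energy, E = mgh. A back-of-the-envelope sketch follows; the train mass, climb, and round-trip efficiency are hypothetical numbers picked for illustration, not figures from Advanced Rail Energy Storage.

```python
G = 9.81  # gravitational acceleration, m/s^2
JOULES_PER_KWH = 3.6e6


def stored_energy_kwh(mass_kg: float, climb_m: float) -> float:
    """Gravitational potential energy gained by lifting mass_kg through climb_m."""
    return mass_kg * G * climb_m / JOULES_PER_KWH


def delivered_energy_kwh(mass_kg: float, climb_m: float,
                         round_trip_efficiency: float) -> float:
    """Energy returned to the grid after motor, generator, and rolling losses."""
    return stored_energy_kwh(mass_kg, climb_m) * round_trip_efficiency


# Hypothetical figures, chosen only for illustration.
TRAIN_MASS_KG = 1_000_000    # a 1,000-tonne train
CLIMB_M = 500                # vertical rise of the track
ROUND_TRIP_EFFICIENCY = 0.8  # assumed, not a published figure

stored = stored_energy_kwh(TRAIN_MASS_KG, CLIMB_M)
delivered = delivered_energy_kwh(TRAIN_MASS_KG, CLIMB_M, ROUND_TRIP_EFFICIENCY)
print(f"stored: {stored:.1f} kWh, delivered: {delivered:.1f} kWh")
```

With these made-up numbers, one trip up the hill stores about 1,360 kWh. Gravity is not a dense way to store energy, so a scheme like this has to win on mass and cheap hardware.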

There’s also something delightful about the image of CALMAC’s IceBank®, Ice Energy’s Ice Bear®, and Axiom’s Refrigeration Battery™ battling it out in the arena of building-sized ice makers.

IceBank is an air-conditioning solution that makes ice at night [when energy demand is low] to cool buildings during the day. (source)

All this may make energy storage seem like a competitive space. But the reality is that these companies aren’t competing with each other so much as they are competing with peaker plants and cheap fossil fuels. An energy storage startup can be in the strange position of continually adjusting their product and strategy in response to fluctuations in fossil fuel prices.

But as in the case of energy production, there should be a tipping point if storing and reclaiming renewable energy can be made decisively cheaper than generating it from natural gas, and can be scaled up to meet demand.

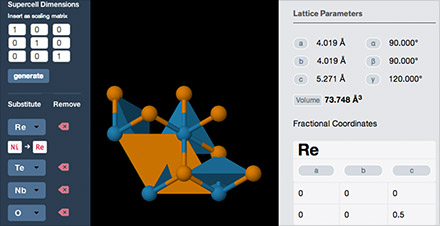

The core technologies in energy storage tend to be physics-based, but software plays essential roles in the form of design tools, simulation tools, and control systems. My favorite example is the inexplicably gorgeous Materials Project, a database and visualization tool for material properties, funded by the Department of Energy’s Vehicle Technologies Program to help invent better batteries.

To the right is how the U.S. currently generates energy. The most conspicuous source of carbon emissions is the thick green bar from “petroleum” to “transportation”. We need to erase that.

While we’re at it, we also ought to erase the thick grey bar from “transportation” to “wasted”.

Passenger vehicles account for the majority of transportation-related greenhouse gas emissions. There are a number of emerging ways to reduce this impact.

Electric vehicles are inevitable. Today, powering a car from the grid might not be much cleaner than burning gasoline. But once the grid cleans up, not only will electric cars be cleaner than gas cars, they may be more efficient than mass transit.

New kinds of powered vehicles are becoming possible which are more nimble and efficient than cars.

Most trips people take are fairly short. It’s not inconceivable that new vehicles could replace cars for most trips if the technology, design, and marketing are right, and the proper infrastructure and laws are in place. And you don’t need to start a car company to work on them.

Coordinated autonomous vehicles could lead to very different ways of moving around people and deliveries. This is basically mobile robotics on a big scale. And again, you don’t necessarily need to go work for a car company in order to make interesting things here.

Reducing the need for personal transportation via urban design. Among other reasons, this is important to counter the likely tendency of autonomous cars to increase urban sprawl, which has been strongly correlated with emissions.

Similar opportunities apply to fleet vehicles. One of the cofounders of Tesla is now in the business of electrifying Fedex vans and garbage trucks. Long-haul trucks are more of a challenge, but there’s room for improvement in fuels, vehicle design, and logistics.

As for airplanes, people might just have to fly less. This will require better tools for remote collaboration, or in Saul Griffith’s words, “Make video conferencing not suck.”

Many home appliances that consume significant electricity — air conditioner, water heater, clothes dryer, electric vehicle charger — have some tolerance in when they actually need to run. You don’t care exactly when your electric car is charging as long as it’s charged in the morning, and you don’t care exactly when your water heater is heating, as long as the tank stays hot.

If your water heater could talk to your neighbor’s water heater and agree to avoid heating water at the same time, it would flatten out the demand curve, and help avoid the ups and downs that must be serviced by peaker plants. The water heater could also work aggressively when solar power was plentiful and hold back when clouds went by, to match the intermittency of renewables and require less energy storage.

The Rocky Mountain Institute calls this “demand flexibility”, and imagines appliances coordinated through price signals.

Demand flexibility uses communication and control technology to shift electricity use across hours of the day while delivering end-use services at the same or better quality but lower cost. It does this by applying automatic control to reshape a customer’s demand profile continuously in ways that either are invisible to or minimally affect the customer. (source)

This sort of coordination is a goal of many of the “smart grid” proposals.
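Here is a minimal sketch of what that coordination could look like: a greedy "valley filling" scheduler in which each flexible appliance, in turn, claims the emptiest hours inside its allowed window. It is a toy, not the Rocky Mountain Institute's model or any smart grid standard, and the demand profile and appliance figures are hypothetical.

```python
def schedule_flexible_loads(baseline_kw, loads):
    """Greedy valley filling over a 24-hour day.

    baseline_kw: 24 hourly values of inflexible demand.
    loads: (name, power_kw, hours_needed, allowed_hours) tuples.
    Returns (total demand per hour, {name: hours the load runs}).
    """
    total = list(baseline_kw)
    plan = {}
    for name, power_kw, hours_needed, allowed_hours in loads:
        if hours_needed > len(allowed_hours):
            raise ValueError(f"{name} cannot finish inside its window")
        # Each load sees the demand already claimed by earlier loads
        # and takes the emptiest hours in its own window.
        chosen = sorted(allowed_hours, key=lambda h: total[h])[:hours_needed]
        for hour in chosen:
            total[hour] += power_kw
        plan[name] = sorted(chosen)
    return total, plan


# Hypothetical demand (kW) with an evening peak from 17:00 to 21:00.
BASELINE_KW = [0.5] * 6 + [1.5] * 3 + [1.0] * 8 + [3.0] * 4 + [1.0] * 3
OVERNIGHT = [21, 22, 23, 0, 1, 2, 3, 4, 5, 6]
ANY_HOUR = list(range(24))

LOADS = [
    ("ev_charger", 7.0, 4, OVERNIGHT),  # must be charged by morning
    ("heater_a", 4.0, 2, ANY_HOUR),     # your water heater
    ("heater_b", 4.0, 2, ANY_HOUR),     # your neighbor's water heater
]

total_kw, plan = schedule_flexible_loads(BASELINE_KW, LOADS)
print(plan)
print("peak demand:", max(total_kw), "kW")
```

The two heaters end up in different hours because the second one sees the first one's claim. The peak comes out at 7.5 kW, against 18 kW if all three appliances simply switched on at 18:00. Swapping the hourly demand for an hourly price would turn the same loop into the price-signal version.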

In the 1970s, efficiency pioneer Art Rosenfeld instigated an unprecedented program of energy saving, literally halting the growth of per-capita electricity consumption in California.

A lot of important technology came out of his lab, such as the ballasts that made compact fluorescent lamps viable, but his two biggest influences were regulatory. Half the energy savings in California came from adjusting the profit structure of power utilities so they could be profitable selling less power.

The other half came from mandating energy usage standards for buildings and appliances. These standards, as well as more recent voluntary programs such as Energy Star, forced companies to compete on efficiency. This drove innovation (and even reduced cost!) far more than simple consumer preference.

Building standards are a particularly interesting case here, because they were, and are, intimately tied to software.

[In 1975] the CEC’s draft “Title 24” residential building standard proposed to limit window area to 15% of wall area, without distinguishing among north, south, east, and west. Indeed I don’t think the standard even mentioned the sun!

I contacted the CEC and discovered why they thought that windows wasted heat in winter and “coolth” in summer. The CEC staff had a choice of only two public-domain computer programs, the “Post Office” program, which was user hostile... and a newer program [NBSLD]... They chose NBSLD, but unfortunately had run it in a “fixed-thermostat” mode that kept the conditioned space at 72°F (22°C) all year...

NBSLD’s author, Tamami Kusuda, had written a “floating-temperature” option, but it was more complicated and still had bugs, and neither Tamami nor anybody at CEC could get it to work satisfactorily. No wonder the CEC concluded that windows wasted energy!

I decided that California needed two programs for energy analysis in buildings: first and immediately, a simple program for the design of single-family dwellings and, second and later, a comprehensive program for the design of large buildings, with a floating-indoor-temperature option and the ability to simulate HVAC distribution systems... (source)
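The effect Rosenfeld describes can be reproduced with a toy model. The sketch below is not NBSLD or DOE-2. It is a single-room, one-hour-step heat balance of my own, with hypothetical values for insulation, window losses, solar gain, and thermal mass. In "fixed" mode the HVAC system cancels every imbalance to hold 22°C; in "floating" mode the room may drift between 22°C and 26°C, so daytime solar gain can be banked for the evening.

```python
def hvac_energy_kwh(ua_kw_per_k, solar_kw, floating,
                    t_out=5.0, heat_setpoint=22.0, cool_limit=26.0,
                    capacity_kwh_per_k=5.0):
    """Heating plus cooling energy over one winter day, in one-hour steps."""
    temp = heat_setpoint
    total = 0.0
    for hour in range(24):
        gain_kw = solar_kw if 9 <= hour <= 15 else 0.0     # sun through the window
        net_kw = gain_kw + ua_kw_per_k * (t_out - temp)    # free heat flow into the room
        if not floating:
            # Fixed thermostat: HVAC cancels every imbalance, whether heating or cooling.
            total += abs(net_kw)
            continue
        free_temp = temp + net_kw / capacity_kwh_per_k     # where the room drifts unaided
        if free_temp < heat_setpoint:
            total += (heat_setpoint - free_temp) * capacity_kwh_per_k
            temp = heat_setpoint
        elif free_temp > cool_limit:
            total += (free_temp - cool_limit) * capacity_kwh_per_k
            temp = cool_limit
        else:
            temp = free_temp
    return total


# Hypothetical house: the window adds heat loss (higher UA) but admits sunlight.
NO_WINDOW = dict(ua_kw_per_k=0.10, solar_kw=0.0)
WITH_WINDOW = dict(ua_kw_per_k=0.15, solar_kw=4.0)

results = {
    (label, mode): hvac_energy_kwh(floating=(mode == "floating"), **house)
    for label, house in (("no window", NO_WINDOW), ("with window", WITH_WINDOW))
    for mode in ("fixed", "floating")
}
for key, kwh in results.items():
    print(key, f"{kwh:.1f} kWh")
```

With these made-up numbers, the fixed-thermostat run charges the window for "cooling" on a winter afternoon and concludes that it wastes energy (about 53.5 kWh against 40.8 kWh). The floating run lets the room warm up a little during the day, and the same window comes out ahead. Which mode the simulation runs in flips the conclusion.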

Rosenfeld’s energy-modeling software (which became DOE-2, then EnergyPlus) was quickly and widely adopted for calculating and specifying building performance standards.

Not only does energy simulation software still drive building standards, energy analysis tools such as Green Building Studio and OpenStudio are now an integral part of the building design process. Particularly interesting are tools such as Sefaira and Flux, which give the designer immediate feedback on energy performance as they adjust building parameters in realtime.

If efficiency incentives and tools have been effective for utilities, manufacturers, and designers, what about for end users? One concern I’ve always had is that most people have no idea where their energy goes, so any attempt to conserve is like optimizing a program without a profiler.

Efficiency through analytics has motivated a lot of projects, from the humble and niche Kill-A-Watt,

to the characteristically short-lived Google PowerMeter and Microsoft Hohm services.

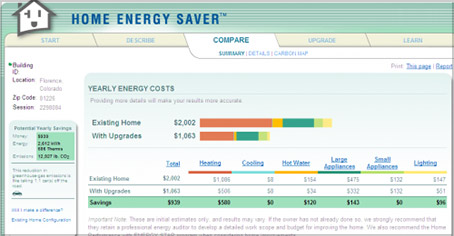

There are online energy calculators, such as Berkeley Lab’s Home Energy Saver,

and energy dashboards are appearing in commercial and institutional buildings.

Many of these projects are unsuccessful and possibly only marginally effective. I don’t think this means that efficiency feedback will always be unsuccessful — just that it needs to be done well, it needs to be actionable, and most importantly, it needs to be targeted at people with the motivation and leverage to take significant action.

Individual consumers and homeowners might not be the best targets. A more promising audience is people who manage large-scale systems and services.

For example, garbage collection. Garbage trucks stop-and-go down every street, in every city, at 3 miles per gallon. The startup Enevo makes sensors which trash collectors install in dumpsters, and provides logistics software that plans an optimal collection route each day. By only picking up full bins, they cut fuel consumption in half.
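The idea can be sketched in a few lines: drop the bins whose sensors report them as not yet full, then route the truck through what remains. I don't know what Enevo's software actually does. The version below uses a plain nearest-neighbor heuristic, which is not an optimal route, and the depot, bin positions, fill levels, and 80% threshold are all hypothetical.

```python
import math


def route_length(depot, stops):
    """Distance of the loop depot -> stops in order -> depot."""
    path = [depot, *stops, depot]
    return sum(math.dist(a, b) for a, b in zip(path, path[1:]))


def nearest_neighbor_route(depot, stops):
    """Visit whichever remaining stop is closest; simple, but not optimal."""
    remaining = list(stops)
    route = []
    here = depot
    while remaining:
        nearest = min(remaining, key=lambda p: math.dist(here, p))
        remaining.remove(nearest)
        route.append(nearest)
        here = nearest
    return route


def plan_collection(depot, bins, threshold=0.8):
    """bins: list of ((x, y), fill_fraction). Only bins at or above threshold are visited."""
    full = [location for location, fill in bins if fill >= threshold]
    return nearest_neighbor_route(depot, full)


# Hypothetical sensor readings: position in km, fill level from 0 to 1.
DEPOT = (0.0, 0.0)
BINS = [
    ((3.0, 4.0), 0.90),
    ((3.0, 0.0), 0.30),
    ((6.0, 8.0), 0.95),
    ((0.0, 9.0), 0.10),
]

every_bin = nearest_neighbor_route(DEPOT, [location for location, _ in BINS])
full_only = plan_collection(DEPOT, BINS)

print("all bins: ", round(route_length(DEPOT, every_bin), 1), "km")
print("full only:", round(route_length(DEPOT, full_only), 1), "km")
```

In this toy case, skipping the two half-empty bins shortens the loop from about 27 km to 20 km. The saving comes from the sensor data; any routing algorithm only helps once you know which stops can be skipped.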

Enevo works at the system level, not the consumer level. There are fewer customers at the system level, but those customers have the motivation and leverage to implement heavy efficiency improvements.

You won’t find programming languages mentioned in an IPCC report. Their influence is indirect; they get taken for granted. But I feel that technical tools are of overwhelming importance, and completely under the radar of most people working to address climate change.

I was hanging out with climate scientists while working on Al Gore’s book, and they mostly did their thing in R. This is typical; most statistical research is done in R. The language seems to inspire a level of vitriol that’s impressive even by programmers’ standards. Even R’s advocates always seem sort of apologetic.

Using R is a bit akin to smoking. The beginning is difficult, one may get headaches and even gag the first few times. But in the long run, it becomes pleasurable and even addictive. Yet, deep down, for those willing to be honest, there is something not fully healthy in it. (source)

Complementary to R is Matlab, the primary language for numerical computing in many scientific and engineering fields. It’s ubiquitous. Matlab has been described as “the PHP of scientific computing”.

MATLAB is not good. Do not use it. (source)

R and Matlab are both forty years old, weighed down with forty years of cruft and bad design decisions. Scientists and engineers use them because they are the vernacular, and there are no better alternatives.

Here’s an opinion you might not hear much — I feel that one effective approach to addressing climate change is contributing to the development of Julia. Julia is a modern technical language, intended to replace Matlab, R, SciPy, and C++ on the scientific workbench. It’s immature right now, but it has beautiful foundations, enthusiastic users, and a lot of potential.

I say this despite the fact that my own work has been in much the opposite direction from Julia. Julia inherits the textual interaction of classic Matlab, SciPy, and other children of the teletype — source code and command lines.

The goal of my own research has been tools where scientists see what they’re doing in realtime, with immediate visual feedback and interactive exploration. I deeply believe that a sea change in invention and discovery is possible, once technologists are working in environments designed around:

ubiquitous visualization and in-context manipulation of the system being studied;

Obviously I think this approach is important, and I urge you to pursue it if it speaks to you.

At the same time, I’m also happy to endorse Julia because, well, it’s just about the only example of well-grounded academic research in technical computing. It’s the craziest thing. I’ve been following the programming language community for a decade, I’ve spoken at SPLASH and POPL and Strange Loop, and it’s only slightly an unfair generalization to say that almost every programming language researcher is working on

(a) languages and methods for software developers,

(b) languages for novices or end-users,

(c) implementation of compilers or runtimes, or

(d) theoretical considerations, often of type systems.

The very concept of a “programming language” originated with languages for scientists — now such languages aren’t even part of the discussion! Yet they remain the tools by which humanity understands the world and builds a better one.

If we can provide our climate scientists and energy engineers with a civilized computing environment, I believe it will make a very significant difference. But not one that is easily visible or measured!

A climate model is software, but it’s a different kind of software than what most developers normally think about. Software development usually means developing software systems — code that does useful work “as itself”, such as apps and servers and games. The purpose of a climate model, on the other hand, is to “be something else” — to simulate a physical system in the world.

A circuit model or mechanical model, these days, is essentially software as well. But again, such a model is not intended to serve as a working software system, but to aid in the design of a working physical system.

Here are a handful of languages intended for modeling, simulating, or designing physical systems:

Languages like these don’t get mentioned at programming language research conferences, or in discussions. They’re typically dismissed as “domain-specific”. This pejorative reflects a peculiar geocentrism of the programming language community, whose “general-purpose languages” such as Java and Python are in fact very much domain-specific — specific to the domain of software development.

Modelica is a programming language, but it is not a language for software development! It’s for developing physical things that affect the physical world — such as the machinery that participates in energy production, energy storage, and energy consumption. Data flow in Modelica works like no general-purpose language in existence, because it’s designed around how things influence things in physics, not on a CPU.

I’ve seen enormous effort expended on languages, tools, and frameworks for software developers. If you believe that language design can significantly affect the quality of software systems, then it should follow that language design can also affect the quality of energy systems. And if the quality of such energy systems will, in turn, affect the livability of our planet, then it’s critical that the language development community give modeling languages the attention they deserve.

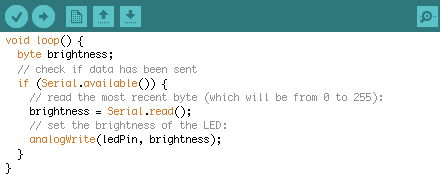

An embedded control system is software, but again, it’s a different kind of software. Conventional software development is about building systems that live in a virtual world of pixels, files, databases, networks. The embedded controller is part of a physical system, sensing and actuating the physical world.

Every modern piece of equipment producing or consuming energy — every wind turbine, battery system, or vehicle — is built around one or more of these control systems.

I started my career designing embedded systems. Standard practice is: you design and perhaps simulate your system in Matlab or Simulink or pencil. You implement it in C. Sometimes C++, sometimes assembly, very occasionally someone will sneak some Forth or Lua in there. But the idea that you might implement a control system in an environment designed for designing control systems — it hasn’t been part of the thinking. This leads to long feedback loops, inflexible designs, and guesswork engineering. But most critically, it denies engineers the sort of exploratory environment that fosters novel ideas.

There exist methodologies with the right intentions — model-based design with generated code seems to be coming into use here and there on industrial projects, for example. But not only are such methods deeply segregated from the mainstream tech community, they are almost antithetical to how today’s programmers are trained to think.

Lessons Learned [from Caterpillar’s pilot project]: Developers who have primarily a control system design background adopt the model-based approach with enthusiasm. Developers with primarily Computer Science backgrounds are uncomfortable with model-based development and require significantly more time and information prior to adopting the methodology. (source)

The success of Arduino has had the perhaps retrograde effect of convincing an entire generation that the way to sense and actuate the physical world is through imperative method calls in C++, shuffling bits and writing to ports, instead of in an environment designed around signal processing, control theory, and rapid and visible exploration. As a result, software engineers find a fluid, responsive programming experience on the screen, and a crude and clumsy programming experience in the world. It’s easier to conjure up virtual fireworks than to blink an LED. So more and more of our engineers have retreated into the screen.

But climate change happens in the physical world. The technology to address it must operate on the physical world. We won’t get that technology without good tools for programming beyond the screen.

The tools discussed so far are for scientists and engineers working on a problem. But let’s back up. How can someone find the right problem to work on in the first place? And how can they evaluate whether they have the right ideas to solve it?

Everyone knows the high-level areas that need work: “clean energy”, “carbon capture”, and so on. But real engineering problems lie in the details — how to quickly estimate wind capacity at a location; how to place windmills without aerodynamic interference; how to store energy in compressed air without losing it through heat.

How do we surface these problems? How can an eager technologist find their way to sub-problems within other people’s projects where they might have a relevant idea? How can they be exposed to process problems common across many projects?

I know a clean-energy inventor who will browse around a treemap of global exports, as a crude way to get a feel for the “space of industry”. She’ll see, for example, “gas turbines”, and get interested in ideas for improved gas turbines. But then what? Where does she go from there?

She wishes she could simply click on “gas turbines”, and explore the space:

What are open problems in the field?

Who’s working on which projects?

What are the fringe ideas?

What are the process bottlenecks?

What dominates cost? What limits adoption?

Why make improvements here? How would the world benefit?

None of this information is at her fingertips. Most isn’t even openly available — companies boast about successes, not roadblocks. For each topic, she would have to spend weeks tracking down and meeting with industry insiders. What she’d like is a tool that lets her skim across entire fields, browsing problems and discovering where she could be most useful.

There are many reasons, of course, why organizations tend not to publicize their problems. But in a planetary crisis, the “secretive competitive company” might not be the ideal organizing structure for human effort. In an admirable gesture of global goodwill, Tesla recently “open-sourced” their patents, but patents represent solutions. What if there were some way Tesla could reveal their open problems?

Suppose my friend uncovers an interesting problem in gas turbines, and comes up with an idea for an improvement. Now what?

Is the improvement significant?

Is the solution technically feasible?

How much would the solution cost to produce?

How much would it need to cost to be viable?

Who would use it? What are their needs?

What metrics are even relevant?

Again, none of this information is at her fingertips, or even accessible. She’d have to spend weeks doing an analysis, tracking down relevant data, getting price quotes, talking to industry insiders.*

What she’d like are tools for quickly estimating the answers to these questions, so she can fluidly explore the space of possibilities and identify ideas that have some hope of being important, feasible, and viable.

* And that’s assuming she can even find the right people to talk to! For some valuable inventions, such as thermal energy storage for supermarkets and electric powertrains for garbage trucks, one wonders how the inventors stumbled upon such a niche application in the first place.

In the case of garbage trucks, the answer is that the technology was developed for a different purpose, and the customer had to figure it out themselves:

FedEx was the first customer, and we did the medium-duty trucks first. Once we got some publicity with that, I got a call from one of the local garbage service providers... “This looks like it would work great for delivery trucks, [but] can you scale that up to my Class 8 garbage trucks? Will that work?” We said, we hadn’t thought of that, but let’s go do the engineering and see if it will. In fact, it does, and it works really well. (source)

Consider the Plethora on-demand manufacturing service, which shows the mechanical designer an instant price quote, directly inside the CAD software, as they design a part in real-time. In what other ways could inventors be given rapid feedback while exploring ideas?

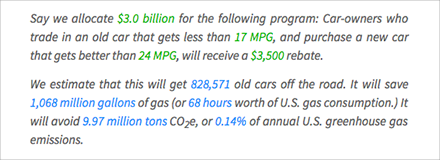

In 2008, economist Alan Blinder published A Modest Proposal: Eco-Friendly Stimulus in the New York Times, proposing a government program to encourage people to scrap their old inefficient cars.

Cash for Clunkers is a generic name for a variety of programs under which the government buys up some of the oldest, most polluting vehicles and scraps them... We can reduce pollution by pulling some of these wrecks off the road. Several pilot programs have found that doing so is a cost-effective way to reduce emissions. (source)

His article inspired the Car Allowance Rebate System, a $3 billion federal program which ran in July 2009. Car owners were offered a $3500 rebate for trading in an inefficient vehicle when purchasing a more efficient one. There was enormous debate, before and after, about what the parameters of the program should be, and whether it would be effective. Proponents claimed:

“Cash for clunkers” is a common-sense proposal that will... reduce the emissions that cause climate change, and make America more energy independent. By helping Americans trade in their old, less fuel efficient cars and trucks for newer, higher mileage vehicles, consumers will save money at the pump, help protect our planet, and create and save jobs for American auto workers. (source)

while critics claimed:

Although the program was originally billed as a way to reduce greenhouse gases, it achieves this aim amid huge expense and massive inefficiencies. Cash for Clunkers may diminish our nation’s overall carbon footprint, but the associated costs will be at least 10 times higher than other carbon-reduction strategies. (source)

Who to believe?

The real question is — why are readers and decision-makers forced to “believe” anything at all? Many claims made during the debate offered no numbers to back them up. Claims with numbers rarely provided context to interpret those numbers. And never were readers shown the calculations behind any numbers. Readers had to make up their minds on the basis of hand-waving, rhetoric, bombast.

Imagine if Blinder’s proposal in the New York Times were written like this:

Say we allocate [amount] for the following program: Car-owners who trade in an old car that gets less than [fuel economy], and purchase a new car that gets better than [fuel economy], will receive a [amount] rebate.

We estimate that this will get [number] old cars off the road. It will save [quantity] of gas (or [quantity] worth of U.S. gas consumption). It will avoid [quantity] CO2e, or [share] of annual U.S. greenhouse gas emissions.

The abatement cost is [amount] per ton CO2e of federal spending, although it’s [amount] per ton CO2e on balance if you account for the money saved by consumers buying less gas.

This passage gives some estimates of what the proposal would actually do. But there’s something more going on. Some numbers above are in green. Drag green numbers to adjust them. (Really, do it!) Notice how the consequences of your adjustments are reflected immediately in the following paragraph. The reader can explore alternative scenarios, understand the tradeoffs involved, and come to an informed conclusion about whether any such proposal could be a good decision.

This is possible because the author is not just publishing words. The author has provided a model — a set of formulas and algorithms that calculate the consequences of a given scenario. Some numbers above are in blue. Click a blue number to reveal how it was calculated. (“It will save …” is a particularly meaty calculation.) Notice how the model’s assumptions are clearly visible, and can even be adjusted by the reader.

Readers are thus encouraged to examine and critique the model. If they disagree, they can modify it into a competing model with their own preferred assumptions, and use it to argue for their position. Model-driven material can be used as grounds for an informed debate about assumptions and tradeoffs.
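As a rough sketch of what such a model might contain, here is a small Python version. It is not the model behind the passage above. The budget and rebate come from the program described earlier; every other default (fuel economies, annual mileage, remaining vehicle life, gas price, and the approximate CO2 released per gallon) is a placeholder assumption that a real author would replace with sourced figures. The names `Scenario` and `evaluate` are invented for illustration, and the sketch counts only CO2 from burning gasoline, not full CO2e.

```python
from dataclasses import dataclass

# Approximate CO2 from burning one gallon of gasoline, in kilograms.
# An assumption for this sketch; substitute a sourced figure.
KG_CO2_PER_GALLON = 8.9


@dataclass
class Scenario:
    budget_usd: float = 3.0e9        # program size given in the text
    rebate_usd: float = 3500.0       # rebate given in the text
    old_mpg: float = 16.0            # hypothetical fuel economy of the trade-in
    new_mpg: float = 25.0            # hypothetical fuel economy of the new car
    miles_per_year: float = 12000.0  # hypothetical annual driving per car
    years_remaining: float = 5.0     # hypothetical remaining life of the old car
    gas_price_usd: float = 3.0       # hypothetical price per gallon


def evaluate(s: Scenario) -> dict:
    """Compute the consequences of one scenario from its explicit assumptions."""
    if s.new_mpg <= s.old_mpg:
        raise ValueError("the new car must be more efficient than the old one")
    cars = s.budget_usd / s.rebate_usd
    miles = s.miles_per_year * s.years_remaining
    gallons_saved = cars * (miles / s.old_mpg - miles / s.new_mpg)
    tons_co2 = gallons_saved * KG_CO2_PER_GALLON / 1000.0  # metric tons
    consumer_savings = gallons_saved * s.gas_price_usd
    return {
        "cars_traded_in": cars,
        "gallons_saved": gallons_saved,
        "tons_co2_avoided": tons_co2,
        "usd_per_ton_federal": s.budget_usd / tons_co2,
        "usd_per_ton_net": (s.budget_usd - consumer_savings) / tons_co2,
    }


# Compare the default scenario with one that demands a more efficient new car.
baseline = evaluate(Scenario())
stricter = evaluate(Scenario(new_mpg=30.0))
for name in baseline:
    print(f"{name}: {baseline[name]:,.0f} -> {stricter[name]:,.0f}")
```

A reader who doubts any assumption can change one field of `Scenario` and rerun the calculation, which is the kind of critique the passage invites.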

Modeling leads naturally from the particular to the general. Instead of seeing an individual proposal as “right or wrong”, “bad or good”, people can see it as one point in a large space of possibilities. By exploring the model, they come to understand the landscape of that space, and are in a position to invent better ideas for all the proposals to come. Model-driven material can serve as a kind of enhanced imagination.

Climate change is a global problem. Discussion and debate will be central to figuring out the best actions to take. We need good tools for imagining, proposing, debating, and understanding these actions.

How do we get public perception and public discussion of energy and climate centered around evidence-grounded models, instead of tips, soundbites, factoids, and emotional rhetoric?

Or — are you really saving the earth by turning off your lights at night? By how much? How do you know?

This is something I struggled with in Ten Brighter Ideas and Explorable Explanations, among other projects.

As with many areas of public interest, the common wisdom surrounding energy conservation consists of myths and legends, rules of thumb and superstitions. We’re given guidelines as soundbites, catchy but insubstantial. We trust them blindly, not knowing whether our actions make any significant impact...

As you explore this document, imagine a world where we expect every claim to be accompanied by an explorable analysis, and every statistic to be linked to a primary source. Imagine collecting data and designing analyses in a collaborative wiki-like manner. (source)

Imagine a concerned citizen who does a web search for "what can I do about climate change". The top two results as I write this are the EPA’s What You Can Do and the David Suzuki Foundation’s Top 10 ways you can stop climate change.

These are lists of proverbs. Little action items, mostly dequantified, entirely decontextualized. How significant is it to “eat wisely” and “trim your waste”? How does it compare to other sources of harm? How does it fit into the big picture? How many people would have to participate in order for there to be appreciable impact? How do you know that these aren’t token actions to assuage guilt?

And why trust them? Their rhetoric is catchy, but so is the horrific “denialist” rhetoric from the Cato Institute and similar. When the discussion is at the level of “trust me, I’m a scientist” and “look at the poor polar bears”, it becomes a matter of emotional appeal and faith, a form of religion.

Climate change is too important for us to operate on faith. Citizens need and deserve reading material which shows context — how significant suggested actions are in the big picture — and which embeds models — formulas and algorithms which calculate that significance, for different scenarios, from primary-source data and explicit assumptions.

Why doesn’t such material exist? Why isn’t every paragraph in the New York Times written like this?

One reason is that the resources for creating this material don’t exist. In order for model-driven material to become the norm, authors will need data, models, tools, and standards.

Ten Brighter Ideas is embarrassingly incomplete. I had to give up after three analyses because it was too painful to find the data I needed. I was spending days desperately typing words into search engines, crawling around government websites, and scrolling through PDFs. (This was before data.gov, which I’ve since found to be more disappointing than useful.)

I assumed the pros had some better process, but later on Our Choice, I found myself watching Al Gore’s research team and climate scientist friends as they typed words into search engines, crawled around government websites, and scrolled through PDFs.*

* Although sometimes they could type words into password-protected search engines to scroll through PDFs that weren’t freely available, despite being products of scholarly research performed with public funds. I don’t have to talk here about the desperate need for open-access scientific publication, right? That’s well-trod ground?

If data is hard to find, models are virtually impossible.

People have figured out these things! The formulas exist in Excel spreadsheets and Matlab code, buried on old hard drives somewhere. Sometimes they’re vaguely described in prose in published papers. But they aren’t accessible — they’re difficult to find, and generally impossible to “grab and use”. The rare author will be able to make up their own models; most authors have no choice but to trust and regurgitate soundbites.

What if there were an “npm” for scientific models?
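One way to picture the idea is a registry in which each model is published under a name and version, carries a note on where its formula comes from, and can be fetched and run by any author. The Python sketch below is purely hypothetical: `PublishedModel`, `ModelRegistry`, and the entry name `wind-power-density` are invented for illustration and describe no existing service. The example entry uses the standard relation for wind power per unit of swept area, P/A = ½ρv³.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class PublishedModel:
    name: str
    version: str
    source: str                    # where the formula comes from
    compute: Callable[..., float]  # the formula itself


class ModelRegistry:
    """An in-memory stand-in for a shared repository of models."""

    def __init__(self) -> None:
        self._models: Dict[Tuple[str, str], PublishedModel] = {}

    def publish(self, model: PublishedModel) -> None:
        key = (model.name, model.version)
        if key in self._models:
            # Published versions never change, so old citations stay valid.
            raise ValueError(f"{model.name}@{model.version} is already published")
        self._models[key] = model

    def get(self, name: str, version: str) -> PublishedModel:
        return self._models[(name, version)]


registry = ModelRegistry()
registry.publish(PublishedModel(
    name="wind-power-density",
    version="1.0.0",
    source="standard relation P/A = 0.5 * rho * v**3 (watts per square meter)",
    compute=lambda air_density, wind_speed: 0.5 * air_density * wind_speed ** 3,
))

# An author "grabs and uses" the model, and can cite exactly what was used.
model = registry.get("wind-power-density", "1.0.0")
watts_per_m2 = model.compute(air_density=1.225, wind_speed=8.0)
print(f"{model.name}@{model.version}: {watts_per_m2:.1f} W/m^2 ({model.source})")
```

Pinning a version is the design choice that matters here: a document that cites `wind-power-density@1.0.0` keeps producing the same numbers even if the model is later revised.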

Suppose there were good access to good data and good models. How would an author write a document incorporating them?

Today, even the most modern writing tools are designed around typing in words, not facts. These tools are suitable for promoting preconceived ideas, but provide no help in ensuring that words reflect reality, or any plausible model of reality. They encourage authors to fool themselves, and fool others.

The first principle is that you must not fool yourself — and you are the easiest person to fool. (source)

Imagine an authoring tool designed for arguing from evidence. I don’t mean merely juxtaposing a document and reference material, but literally “autocompleting” sourced facts directly into the document. Perhaps the tool would have built-in connections to fact databases and model repositories, not unlike the built-in spelling dictionary. What if it were as easy to insert facts, data, and models as it is to insert emoji and cat photos?

Furthermore, the point of embedding a model is that the reader can explore scenarios within the context of the document. This requires tools for authoring “dynamic documents” — documents whose contents change as the reader explores the model. Such tools are pretty much non-existent.

The examples above use Tangle, a little library I made as a bootstrapping step. It’s far from the goal of a real dynamic authoring tool. What might such a tool look like? Some sort of fusion of word processor and spreadsheet? An Inform-like environment for composing dynamic text?
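For a sense of the bare mechanism, here is a minimal Python sketch of text whose derived numbers are recomputed whenever a parameter changes. It is not Tangle and does not use Tangle's API; the `DynamicDocument` class is a hypothetical name, and the bulb wattage and hours are made-up illustrative values.

```python
from typing import Callable, Dict


class DynamicDocument:
    """Text whose derived numbers are recomputed from adjustable parameters."""

    def __init__(
        self,
        template: str,
        params: Dict[str, float],
        derived: Dict[str, Callable[[Dict[str, float]], float]],
    ) -> None:
        self.template = template
        self.params = dict(params)
        self.derived = derived

    def set(self, name: str, value: float) -> None:
        """Change one adjustable parameter, as a reader dragging a number would."""
        if name not in self.params:
            raise KeyError(name)
        self.params[name] = value

    def render(self) -> str:
        """Recompute every derived value, then fill in the text."""
        values = dict(self.params)
        for name, formula in self.derived.items():
            values[name] = formula(self.params)
        return self.template.format(**values)


doc = DynamicDocument(
    template=(
        "Leaving a {watts:.0f} W bulb on for {hours:.0f} hours a night "
        "uses {kwh_per_year:.0f} kWh a year."
    ),
    params={"watts": 60.0, "hours": 8.0},
    derived={"kwh_per_year": lambda p: p["watts"] * p["hours"] * 365 / 1000},
)

print(doc.render())
doc.set("watts", 10.0)  # the reader adjusts a number
print(doc.render())     # the sentence updates to match
```

Everything hard about a real authoring tool is missing here: direct manipulation, showing the calculation behind a number, and letting a writer compose all this without programming.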

The initial proposals I sketched for Our Choice were interactive graphics with scenario models, where readers could adjust parameters (e.g. pollution rate) and see the estimated effects. The book editors rejected these, immediately.

The reason was that every number in the text required a citation. This was especially a concern for Al Gore, whose readership includes an aggressive contingent meticulously seeking proof of his deceptive fabrications. For the book’s integrity, every number in the book must have appeared previously in a scientific paper. An interactive graphic which generated its own numbers from an algorithm was unpublishable — even if it used the same algorithm that the scientists themselves used.

A commitment to sourcing every fact is laudable, of course. What’s missing is an understanding of citing and publishing models, not just the data derived from those models. This is a new problem — older media couldn’t generate data on their own. Authors will need to figure out journalistic standards around model citation, and readers will need to become literate in how to “read” a model and understand its limitations.

I didn’t mention nuclear because I don’t know much about it. See Stewart Brand’s book Whole Earth Discipline for an optimistic take from a recent convert.

“Geoengineering” refers to “the deliberate large-scale intervention in the earth’s natural systems to counteract climate change”. The topic was taboo for many years, and it remains “controversial” (a euphemism for “socially risky to advocate”). As a result, the amount of research into geoengineering is almost negligible compared to R&D on decarbonization.

This is unfortunate, because if total global decarbonization by 2050 doesn’t succeed, we’ll be desperately wishing for some well-developed backup plans. And even if, by some miracle, it does, we’ll still have 450-500 ppm of carbon in the atmosphere, not going anywhere, still wreaking havoc.

Geoengineering is dicey because earth systems are complex and poorly understood, and interventions have unforeseen consequences. To me, this is a clear and urgent call for much better tools for understanding complex systems and foreseeing consequences.

There is almost certainly some set of things we can do at planet-scale that would be effective and safe. It is almost certainly the case that the best options are ideas that no one has thought of yet. Who will make the tools to enable people to conceive and verify these ideas?

Fears of geoengineering play into the narrative of a people done in by their hubris. But we’re past that point; we had the hubris and it did us in. Human beings now dominate the planet, and it’s folly to pretend that merely “minimizing our impact” will reverse the damage.

In 1968, Stewart Brand began the Whole Earth Catalog with the quip, “We are as gods, and might as well get good at it.” In 2009, his Whole Earth Discipline upped the urgency: “We are as gods, and have to get good at it.”

If we must be gods, we should at least be cautious and well-informed gods, with the best possible tools for seeing, understanding, and debating our interventions, and the best possible meta-tools for improving those tools.

The inventors of the integrated circuit were not thinking about how to preserve the environment. Neither were the founders of the internet. (Maybe except one.) Today, it’s nearly impossible to imagine addressing global problems (or even reading this essay) without all the tools that have been built upon this technology.

Foundational technology appears essential only in retrospect. Looking forward, these things have the character of “unknown unknowns” — they are rarely sought out (or funded!) as a solution to any specific problem. They appear out of the blue, initially seem niche, and eventually become relevant to everything.

They may be hard to predict, but they have some common characteristics. One is that they scale well. Integrated circuits and the internet both scaled their “basic idea” from a dozen elements to a billion. Another is that they are purpose-agnostic. They are “material” or “infrastructure”, not applications.

I have some candidates in mind; here’s one I’d put money on — Van Jacobson’s new internet protocol is a really big deal. I realize it sounds like overreach to claim that “Named Data Networking” could be fundamental to addressing climate change — that’s my point — but my thought is, how could it not?

Climate change, closely understood, is terrifying. The natural human response to such a bleak situation is despair.

But despair is not useful. Despair is paralysis, and there’s work to be done.

I feel that this is an essential psychological problem to be solved, and one that I’ve never seen mentioned: How do we create the conditions under which scientists and engineers can do great creative work without succumbing to despair? And how can new people be encouraged to take up the problem, when their instinct is to turn away and work on something that lets them sleep at night?

Crisis can be motivating for some people in some circumstances — Rad Lab, Manhattan Project, etc. — and there are clearly some people who draw motivation from the climate crisis. But as George Marshall has explored in depth, most people can’t handle it, and need to put it out of their minds.

There are other fields of human endeavor in which morale must be managed quite directly, and they’ve had millennia to develop techniques for “rallying the troops” or “uplifting the congregation”. I wonder if we can learn from them. For both the climate worker and the soldier, the likelihood of imminent annihilation is a distraction, and they must be given a frame of mind that lets them concentrate on the work to be done.

Mikey Dickerson, an engineer who left Google to head up the heroic rescue of healthcare.gov, concluded a recent talk with a plea to the tech crowd:

This is real life. This is your country... Our country is a place where we allocate our resources through the collective decisions that all of us make... We allocate our resources to the point where we have thousands of engineers working on things like picture-sharing apps, when we’ve already got dozens of picture-sharing apps.

We do not allocate anybody to problems like [identity theft of kids in foster care, food stamp distribution, the immigration process, federal pensions, the VA]... This is just a handful of things that I’ve been asked to staff in the last week or so and do not have adequate staff to do...

These are all problems that need the attention of people like you. (source)

100,000 people received an engineering bachelor’s degree in the U.S. last year. There are at least 100,000 people, every year, looking for an engineering problem to solve. I have my own plea to all such people —

One sometimes gets the feeling, as Ian Bogost put it, of rearranging app icons on the Titanic. I think the tech community can do better than that. You can do better than that.

Climate change is the problem of our time. It’s everyone’s problem, but it’s our responsibility — as people with the incomparable leverage of being able to work magic through technology.

In this essay, I’ve tried to sketch out a map of where such magic is needed — systems for producing, moving, and consuming clean energy; tools for building them; media for understanding what needs to be built. There are opportunities everywhere. Let’s get to work.